ASTM D6299-23a

Standard Practice for Applying Statistical Quality Assurance and Control Charting Techniques to Evaluate Analytical Measurement System Performance

SIGNIFICANCE AND USE

5.1 This practice may be used to continuously demonstrate the proficiency of analytical measurement systems that are used for establishing and ensuring the quality of petroleum and petroleum products.

5.2 Data accrued, using the techniques included in this practice, provide the ability to monitor analytical measurement system precision and bias.

5.3 These data are useful for updating test methods as well as for indicating areas of potential measurement system improvement.

5.4 Control chart statistics can be used to compute limits that the signed difference (Δ) between two single results for the same sample obtained under site precision conditions is expected to fall outside of about 5 % of the time, when each result is obtained using a different measurement system in the same laboratory executing the same test method, and both systems are in a state of statistical control.
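As a rough illustration of 5.4, the sketch below computes a pair of limits for the signed difference Δ between single results from two systems. It assumes the two results are independent and Gaussian, so that Δ has mean equal to the difference of the two system means and variance equal to the sum of the two variances. This is a textbook normal-theory construction and is not necessarily the formula the practice prescribes. The function names, argument names, and numeric inputs are hypothetical.

```python
import math

# Two-sided 95 % quantile of the standard normal distribution.
# Assumption: differences between the two systems are Gaussian.
Z_95 = 1.96


def agreement_limits(mean_a, sigma_a, mean_b, sigma_b, z=Z_95):
    """Return (lower, upper) limits for delta = result_A - result_B.

    mean_a, mean_b   : long-run averages of each system on the same QC material
    sigma_a, sigma_b : standard deviations of each system's results
    """
    center = mean_a - mean_b
    half_width = z * math.sqrt(sigma_a ** 2 + sigma_b ** 2)
    return center - half_width, center + half_width


def within_limits(delta, lower, upper):
    """True when a signed difference lies inside the limits (inclusive)."""
    return lower <= delta <= upper


# Hypothetical control chart statistics for two systems in one laboratory.
lal, ual = agreement_limits(mean_a=50.2, sigma_a=0.30, mean_b=50.0, sigma_b=0.40)
ok = within_limits(50.9 - 50.1, lal, ual)      # difference of 0.8
flagged = not within_limits(51.6 - 49.9, lal, ual)  # difference of 1.7
```

A difference outside the limits is expected about 5 % of the time even when both systems are in statistical control, so a single excursion is a prompt to investigate rather than proof of a problem.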

SCOPE

1.1 This practice covers information for the design and operation of a program to monitor and control ongoing stability and precision and bias performance of selected analytical measurement systems using a collection of generally accepted statistical quality control (SQC) procedures and tools.

Note 1: A complete list of criteria for selecting measurement systems to which this practice should be applied and for determining the frequency at which it should be applied is beyond the scope of this practice. However, some factors to be considered include (1) frequency of use of the analytical measurement system, (2) criticality of the parameter being measured, (3) system stability and precision performance based on historical data, (4) business economics, and (5) regulatory, contractual, or test method requirements.

1.2 This practice is applicable to stable analytical measurement systems that produce results on a continuous numerical scale.

1.3 This practice is applicable to laboratory test methods.

1.4 This practice is applicable to validated process stream analyzers.

1.5 This practice is applicable to monitoring the differences between two analytical measurement systems that purport to measure the same property provided that both systems have been assessed in accordance with the statistical methodology in Practice D6708 and the appropriate bias applied.

Note 2: For validation of univariate process stream analyzers, see also Practice D3764.

Note 3: One or both of the analytical systems in 1.5 may be laboratory test methods or validated process stream analyzers.

1.6 This practice assumes that the normal (Gaussian) model is adequate for the description and prediction of measurement system behavior when it is in a state of statistical control.

Note 4: For non-Gaussian processes, transformations of test results may permit proper application of these tools. Consult a statistician for further guidance and information.
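Note 4 leaves the choice of transformation open. As a minimal sketch, assuming strictly positive, right-skewed results and a natural-log transform (one common choice, not one the practice names), the example below compares sample skewness before and after the transform. The data and the function name are hypothetical, and skewness is only a crude screen, not a formal normality test.

```python
import math
from statistics import mean


def sample_skewness(values):
    """Moment-based skewness m3 / m2**1.5; zero for symmetric data."""
    m = mean(values)
    m2 = mean([(v - m) ** 2 for v in values])
    m3 = mean([(v - m) ** 3 for v in values])
    return m3 / m2 ** 1.5


# Hypothetical right-skewed test results (all strictly positive).
raw_results = [1.0, 2.0, 2.0, 3.0, 3.0, 3.0, 4.0, 5.0, 8.0, 20.0]

# Candidate transformation: natural logarithm.
log_results = [math.log(v) for v in raw_results]

skew_raw = sample_skewness(raw_results)
skew_log = sample_skewness(log_results)

# A smaller absolute skewness suggests the transformed scale is closer
# to symmetric, and control charts could then be kept on that scale.
transform_helps = abs(skew_log) < abs(skew_raw)
```

If a transformation is adopted, the chart statistics and limits would be computed on the transformed values, which is one reason the note recommends consulting a statistician.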

1.7 This international standard was developed in accordance with internationally recognized principles on standardization established in the Decision on Principles for the Development of International Standards, Guides and Recommendations issued by the World Trade Organization Technical Barriers to Trade (TBT) Committee.

General Information

- Status: Published

- Publication Date: 30-Nov-2023

- Technical Committee: D02 - Petroleum Products, Liquid Fuels, and Lubricants

- Drafting Committee: D02.94 - Coordinating Subcommittee on Quality Assurance and Statistics

Relations

- Refers: ASTM D4175-23a - Standard Terminology Relating to Petroleum Products, Liquid Fuels, and Lubricants (Effective Date: 15-Dec-2023)

- Refers: ASTM D4175-23e1 - Standard Terminology Relating to Petroleum Products, Liquid Fuels, and Lubricants (Effective Date: 01-Jul-2023)

- Referred By: ASTM D8428-21 - Standard Guide for Establishing Analyst Competence to Perform a Test Method (Effective Date: 01-Dec-2023)

Overview

ASTM D6299-23a, titled Standard Practice for Applying Statistical Quality Assurance and Control Charting Techniques to Evaluate Analytical Measurement System Performance, provides a thorough framework for enhancing and monitoring the quality of analytical measurement systems. Developed by ASTM International, this practice is primarily focused on laboratories and process analyzers involved in the measurement and quality control of petroleum and petroleum products.

This standard guides users in designing and managing programs using Statistical Quality Control (SQC) tools to ensure ongoing stability, precision, and accuracy in analytical testing. By implementing statistical methods such as control charting, laboratories can proactively detect issues, demonstrate measurement proficiency, and support continual improvement.

Key Topics

- Statistical Quality Control (SQC) Procedures: The standard emphasizes using SQC tools, especially control charts, to routinely assess and document measurement system behavior.

- Monitoring Precision and Bias: Data collected through these techniques enable ongoing surveillance of a system’s precision (in this practice, site precision R') and bias (systematic error).

- Quality Control (QC) and Check Standards: It requires the use of stable, homogeneous QC samples and check standards to track performance over time.

- Control Chart Statistics: Guidance is provided on establishing appropriate control limits, which help determine when a measurement system is “in control” or needs intervention.

- Comparison of Measurement Systems: The practice facilitates comparing results between two different measurement systems for the same property, provided both have been properly assessed and bias-corrected.

- Assumptions: It is based on the measurement system producing stable results on a continuous numerical scale and presumes the data distribution is approximately normal (Gaussian). For non-Gaussian data, statistical consultation and transformation may be necessary.
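The control chart topic above can be sketched with a generic Shewhart individuals (I) and moving range (MR) chart. This is a minimal sketch under stated assumptions, not the procedure from the practice itself: the constants 2.66 and 1.128 are the usual I/MR chart constants for moving ranges of two consecutive results, the mean of the QC results stands in for the system expected value, and the QC data are invented. The factor 2.77 comes from the practice's definition of site precision as 2.77 times the site precision standard deviation.

```python
from statistics import mean

D2_N2 = 1.128        # expected range / sigma for subgroups of size 2
I_CHART_K = 2.66     # 3 / 1.128, gives 3-sigma limits from the average MR
SITE_PRECISION_K = 2.77  # R' = 2.77 * sigma, per the practice's definition


def i_chart_statistics(results):
    """Center line, limits, and precision estimates from sequential QC results."""
    if len(results) < 2:
        raise ValueError("need at least two results to form a moving range")
    center = mean(results)
    moving_ranges = [abs(b - a) for a, b in zip(results, results[1:])]
    mr_bar = mean(moving_ranges)
    sigma = mr_bar / D2_N2  # MR-based estimate of the standard deviation
    return {
        "center": center,
        "mr_bar": mr_bar,
        "sigma": sigma,
        "ucl": center + I_CHART_K * mr_bar,
        "lcl": center - I_CHART_K * mr_bar,
        "site_precision": SITE_PRECISION_K * sigma,
    }


def points_outside_limits(results, stats):
    """Indices of results beyond the upper or lower control limit."""
    return [i for i, x in enumerate(results)
            if x > stats["ucl"] or x < stats["lcl"]]


# Hypothetical QC results collected in time order.
qc_results = [10.0, 10.2, 9.9, 10.1, 10.0, 9.8, 10.3, 10.1]
chart = i_chart_statistics(qc_results)
baseline_flags = points_outside_limits(qc_results, chart)
new_flags = points_outside_limits([10.1, 11.0], chart)
```

Limits are computed once from a baseline period and then applied to later results, which is why `new_flags` is evaluated against the stored `chart` rather than recomputed statistics.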

Applications

ASTM D6299-23a delivers significant practical value for:

- Laboratory Quality Assurance: The practice is essential for labs performing routine analysis of petroleum and petroleum products, helping to ensure reliable and traceable results.

- Validated Process Stream Analyzers: It applies to both laboratory methods and online analyzers that require continual QC assessment.

- Regulatory and Contractual Compliance: Organizations can meet regulatory, business, and customer requirements by documenting and controlling analytical performance.

- Method Updates and Process Improvements: The data collected supports targeted method enhancements and process optimization by identifying areas for improvement.

- Inter-system Comparison: Laboratories that operate multiple measurement systems can use this standard to reliably compare their outputs, improving confidence in cross-system consistency.

Related Standards

For comprehensive laboratory quality management and statistical validation, ASTM D6299-23a references and complements several other internationally recognized standards, including:

- ASTM D6708: Statistical assessment of agreement between two test methods for the same property.

- ASTM D3764: Validation of the performance of process stream analyzer systems.

- ASTM D6300: Determination of precision and bias data for petroleum test methods.

- ASTM D6617: Laboratory bias detection using standard materials.

- ASTM D6792: Quality management systems for testing laboratories.

- ASTM D7372: Analysis and interpretation of proficiency test program results.

- ASTM E177 and E178: Use of the terms precision and bias in ASTM test methods (E177) and dealing with outlying observations (E178).

- ISO Standards: Where applicable, the practice is compatible with ISO approaches for laboratory quality and measurement assurance.

By following ASTM D6299-23a, laboratories and process facilities maintain a robust statistical approach to analytical quality assurance, supporting regulatory compliance, improved measurement confidence, and proactive system improvement in the petroleum industry.

Frequently Asked Questions

ASTM D6299-23a is a standard published by ASTM International. Its full title is "Standard Practice for Applying Statistical Quality Assurance and Control Charting Techniques to Evaluate Analytical Measurement System Performance".

ASTM D6299-23a is classified under the following ICS (International Classification for Standards) categories: 03.120.30 - Application of statistical methods. The ICS classification helps identify the subject area and facilitates finding related standards.

ASTM D6299-23a is linked to the following standards: ASTM D6299-23e1, ASTM D6708-24, ASTM D6300-24, ASTM D4175-23a, ASTM D6300-23a, ASTM D6300-23, ASTM D4175-23e1, ASTM E456-13a(2022)e1, ASTM E456-13a(2022), ASTM D8428-21, ASTM D874-23, ASTM D3120-08(2019), ASTM D6890-22, ASTM D8001-16e1, ASTM D7318-19e1. Checking these relationships helps ensure you are using the most current and applicable version of the standard.

Standards Content (Sample)

This international standard was developed in accordance with internationally recognized principles on standardization established in the Decision on Principles for the Development of International Standards, Guides and Recommendations issued by the World Trade Organization Technical Barriers to Trade (TBT) Committee.

Designation: D6299 − 23a

An American National Standard

Standard Practice for Applying Statistical Quality Assurance and Control Charting Techniques to Evaluate Analytical Measurement System Performance

This standard is issued under the fixed designation D6299; the number immediately following the designation indicates the year of original adoption or, in the case of revision, the year of last revision. A number in parentheses indicates the year of last reapproval. A superscript epsilon (ε) indicates an editorial change since the last revision or reapproval.

This practice is under the jurisdiction of ASTM Committee D02 on Petroleum Products, Liquid Fuels, and Lubricants and is the direct responsibility of Subcommittee D02.94 on Coordinating Subcommittee on Quality Assurance and Statistics. Current edition approved Dec. 1, 2023. Published December 2023. Originally approved in 1998. Last previous edition approved in 2023 as D6299 – 23ε1. DOI: 10.1520/D6299-23A.

*A Summary of Changes section appears at the end of this standard

Copyright © ASTM International, 100 Barr Harbor Drive, PO Box C700, West Conshohocken, PA 19428-2959. United States

1. Scope*

1.1 This practice covers information for the design and operation of a program to monitor and control ongoing stability and precision and bias performance of selected analytical measurement systems using a collection of generally accepted statistical quality control (SQC) procedures and tools.

NOTE 1—A complete list of criteria for selecting measurement systems to which this practice should be applied and for determining the frequency at which it should be applied is beyond the scope of this practice. However, some factors to be considered include (1) frequency of use of the analytical measurement system, (2) criticality of the parameter being measured, (3) system stability and precision performance based on historical data, (4) business economics, and (5) regulatory, contractual, or test method requirements.

1.2 This practice is applicable to stable analytical measurement systems that produce results on a continuous numerical scale.

1.3 This practice is applicable to laboratory test methods.

1.4 This practice is applicable to validated process stream analyzers.

1.5 This practice is applicable to monitoring the differences between two analytical measurement systems that purport to measure the same property provided that both systems have been assessed in accordance with the statistical methodology in Practice D6708 and the appropriate bias applied.

NOTE 2—For validation of univariate process stream analyzers, see also Practice D3764.

NOTE 3—One or both of the analytical systems in 1.5 may be laboratory test methods or validated process stream analyzers.

1.6 This practice assumes that the normal (Gaussian) model is adequate for the description and prediction of measurement system behavior when it is in a state of statistical control.

NOTE 4—For non-Gaussian processes, transformations of test results may permit proper application of these tools. Consult a statistician for further guidance and information.

1.7 This international standard was developed in accordance with internationally recognized principles on standardization established in the Decision on Principles for the Development of International Standards, Guides and Recommendations issued by the World Trade Organization Technical Barriers to Trade (TBT) Committee.

2. Referenced Documents

2.1 ASTM Standards:

D3764 Practice for Validation of the Performance of Process Stream Analyzer Systems

D4175 Terminology Relating to Petroleum Products, Liquid Fuels, and Lubricants

D5191 Test Method for Vapor Pressure of Petroleum Products and Liquid Fuels (Mini Method)

D6300 Practice for Determination of Precision and Bias Data for Use in Test Methods for Petroleum Products, Liquid Fuels, and Lubricants

D6617 Practice for Laboratory Bias Detection Using Single Test Result from Standard Material

D6708 Practice for Statistical Assessment and Improvement of Expected Agreement Between Two Test Methods that Purport to Measure the Same Property of a Material

D6792 Practice for Quality Management Systems in Petroleum Products, Liquid Fuels, and Lubricants Testing Laboratories

D7372 Guide for Analysis and Interpretation of Proficiency Test Program Results

D7915 Practice for Application of Generalized Extreme Studentized Deviate (GESD) Technique to Simultaneously Identify Multiple Outliers in a Data Set

E177 Practice for Use of the Terms Precision and Bias in ASTM Test Methods

E178 Practice for Dealing With Outlying Observations

E456 Terminology Relating to Quality and Statistics

E691 Practice for Conducting an Interlaboratory Study to Determine the Precision of a Test Method

For referenced ASTM standards, visit the ASTM website, www.astm.org, or contact ASTM Customer Service at service@astm.org. For Annual Book of ASTM Standards volume information, refer to the standard’s Document Summary page on the ASTM website.

3. Terminology

3.1 Definitions:

3.1.1 More extensive lists of terms related to quality and statistics are found in Terminology D4175, Practice D6300, and Terminology E456.

3.1.2 repeatability conditions, n—conditions where independent test results are obtained with the same method on identical test items in the same laboratory by the same operator using the same equipment within short intervals of time. D6300

3.1.3 reproducibility (R), n—a quantitative expression for the random error associated with the difference between two independent results obtained under reproducibility conditions that would be exceeded with an approximate probability of 5 % (one case in 20 in the long run) in the normal and correct operation of the test method. D6300

3.1.4 reproducibility conditions, n—conditions where independent test results are obtained with the same method on identical test items in different laboratories with different operators using different equipment.

3.1.4.1 Discussion—Different laboratory by necessity means a different operator, different equipment, and different location and under different supervisory control. D6300

3.2 Definitions of Terms Specific to This Standard:

3.2.1 More extensive lists of terms related to quality and statistics are found in Terminology D4175, Practice D6300, and Terminology E456.

3.2.2 accepted reference value, n—a value that serves as an agreed-upon reference for comparison and that is derived as (1) a theoretical or established value, based on scientific principles, (2) an assigned value, based on experimental work of some national or international organization, such as the U.S. National Institute of Standards and Technology (NIST), or (3) a consensus value, based on collaborative experimental work under the auspices of a scientific or engineering group.

3.2.3 accuracy, n—the closeness of agreement between an observed value and an accepted reference value.

3.2.4 analytical measurement system, n—a collection of one or more components or subsystems, such as samplers, test equipment, instrumentation, display devices, data handlers, printouts or output transmitters, that is used to determine a quantitative value of a specific property for an unknown sample in accordance with a test method.

3.2.4.1 Discussion—A standard test method (for example, ASTM, ISO) executed at a single site using a specific instrument is an example of an analytical measurement system.

3.2.4.2 Discussion—The control chart methodology and work processes described in this practice are intended to be applied independently to the final results produced from each individual measurement system, or, differences between results from two individual measurement systems for the same test sample. They are not intended to be applied to combined final results from multiple individual analytical systems or different instruments executing the same test method.

3.2.5 assignable cause, n—a factor that contributes to variation and that is feasible to detect and identify.

3.2.6 bias, n—a systematic error that contributes to the difference between a population mean of the measurements or test results and an accepted reference or true value.

3.2.7 blind submission, n—submission of a check standard or quality control (QC) sample for analysis without revealing the expected value to the person performing the analysis.

3.2.8 check standard, n—in QC testing, a material having an accepted reference value used to determine the accuracy of a measurement system.

3.2.8.1 Discussion—A check standard is preferably a material that is either a certified reference material with traceability to a nationally recognized body or a material that has an accepted reference value established through interlaboratory testing. For some measurement systems, a pure, single component material having known value or a simple gravimetric or volumetric mixture of pure components having calculable value may serve as a check standard. Users should be aware that for measurement systems that show matrix dependencies, accuracy determined from pure compounds or simple mixtures may not be representative of that achieved on actual samples.

3.2.9 common (chance, random) cause, n—for quality assurance programs, one of generally numerous factors, individually of relatively small importance, that contributes to variation, and that is not feasible to detect and identify.

3.2.10 control limits, n—limits on a control chart that are used as criteria for signaling the need for action or for judging whether a set of data does or does not indicate a state of statistical control.

3.2.11 double blind submission, n—submission of a check standard or QC sample for analysis without revealing the check standard or QC sample status and expected value to the person performing the analysis.

3.2.12 in-statistical-control, adj—a process, analytical measurement system, or function that exhibits variations that can only be attributable to common cause.

3.2.13 lot, n—a definite quantity of a product or material accumulated under conditions that are considered uniform for sampling purposes.

3.2.14 out-of-statistical-control, adj—a process, analytical measurement system, or function that exhibits variations in addition to those that can be attributable to common cause and the magnitude of these additional variations exceed specified limits.

3.2.14.1 Discussion—For clarification, a transition from an in-statistical-control system to an out-of-statistical-control system does not automatically imply that there is a change in the fit for use status of the system in terms of meeting the requirements for the intended application.

3.2.15 precision, n—the closeness of agreement between test results obtained under prescribed conditions.

3.2.16 proficiency testing, n—determination of a laboratory’s testing capability by participation in an interlaboratory crosscheck program.

3.2.16.1 Discussion—ASTM Committee D02 conducts proficiency testing among hundreds of laboratories, using a wide variety of petroleum products and lubricants.

3.2.17 quality control (QC) sample, n—for use in quality assurance programs to determine and monitor the precision and stability of a measurement system, a stable and homogeneous material having physical or chemical properties, or both, similar to those of typical samples tested by the analytical measurement system; the material is properly stored to ensure sample integrity, and is available in sufficient quantity for repeated, long term testing.

3.2.18 system expected value (SEV), n—for a QC sample this is an estimate of the theoretical limiting value towards which the average of results collected from a single in-statistical-control measurement system under site precision conditions tends as the number of results approaches infinity.

3.2.18.1 Discussion—The SEV is associated with a single measurement system; for control charts that are plotted in actual measured units, the SEV is required, since it is used as a reference value from which upper and lower control limits for the control chart specific to a batch of QC material are constructed.

3.2.19 site precision (R'), n—for a single analytical measurement system (see 3.2.4), the value which the absolute difference between two individual test results obtained under site precision conditions is expected to exceed about 5 % of the time (one case in 20 in the long run) in the normal and correct operation of the test method.

3.2.19.1 Discussion—It is defined as 2.77 times $\sigma_{R'}$, the standard deviation of results obtained under site precision conditions.

3.2.20 site precision conditions, n—for a single analytical measurement system (see 3.2.4), conditions under which test results are obtained by one or more operators in a single site location practicing the same test method on a single measurement system using test specimens taken at random from the same sample of material, over an extended period of time spanning at least a 20 day interval.

3.2.20.1 Discussion—Site precision conditions should include all sources of variation that are typically encountered during normal, long term operation of the measurement system. Thus, all operators who are involved in the routine use of the measurement system should contribute results to the site precision determination. In situations of high usage of a test method where multiple QC results are obtained within a 24 h period, then only results separated by at least 4 h to 8 h, depending on the absence of auto-correlation in the data, the nature of the test method/instrument, site requirements, or regulations, should be used in site precision calculations to reflect the longer term variation in the system.

3.2.21 site precision standard deviation, n—the standard deviation of results obtained under site precision conditions for an individual measurement system and materials that are similar in composition and property level to the QC samples used to establish the standard deviation.

3.2.22 upper (UAL) and lower agreement limit (LAL), n—the numerical limits that the signed difference (∆) between two single test results, each obtained under site precision conditions from a different analytical system located in the same laboratory executing the same test method on the same sample, is expected to fall outside about 5 % of the time, when both systems are in a state of statistical control per this practice.

3.2.22.1 Discussion—The limits are calculated using the most current control chart statistics from each system for the same QC material.

3.2.22.2 Discussion—The calculation methodology assumes that the standard deviation ($\sigma_{R'}$) for the control chart QC material can be extrapolated to the test sample.

3.2.22.3 Discussion—Since the uncertainty for the SEV estimate of each system is based on many measurements, it is expected to be small relative to ∆, hence, it is not included in the calculation of the limits.

3.2.23 validation audit sample, n—a QC sample or check standard used to verify precision and bias estimated from routine quality assurance testing.

3.3 Symbols:

3.3.1 ARV—accepted reference value.

3.3.2 ∆—signed difference between two single test results.

3.3.3 EWMA—exponentially weighted moving average.

3.3.4 I—individual observation (as in I-chart).

3.3.5 MR—moving range.

3.3.6 $\overline{MR}$—average of moving range.

3.3.7 LAL—lower agreement limit.

3.3.8 QC—quality control.

3.3.9 R'—site precision.

3.3.10 SEV—system expected value.

3.3.11 $\sigma_{R'}$—site precision standard deviation.

3.3.12 UAL—upper agreement limit.

3.3.13 VA—validation audit.

3.3.14 $\chi^2$—chi squared.

3.3.15 λ—lambda.

4. Summary of Practice

4.1 QC samples and check standards are regularly analyzed by the measurement system. Control charts and other statistical techniques are presented to screen, plot, and interpret test results in accordance with industry-accepted practices to ascertain the in-statistical-control status of the measurement system.

4.2 Statistical estimates of the measurement system precision and bias are calculated and periodically updated using accrued data.

4.3 In addition, as part of a separate validation audit procedure, QC samples and check standards may be submitted blind or double-blind and randomly to the measurement system

D6299 − 23a

for routine testing to verify that the calculated precision and each with a quantity sufficient to conduct the analysis.

bias are representative of routine measurement system perfor- Similarly, samples prone to oxidation may benefit from split-

mance when there is no prior knowledge of the expected value ting the bulk sample into smaller containers that can be

or sample status. blanketed with an inert gas prior to being sealed and leaving

them sealed until the sample is needed.)

5. Significance and Use

6.2 Check standards are used to validate the accuracy of the

5.1 This practice may be used to continuously demonstrate analytical measurement system.

the proficiency of analytical measurement systems that are

6.2.1 A check standard may be a commercial standard

used for establishing and ensuring the quality of petroleum and reference material when such material is available in appropri-

petroleum products.

ate quantity, quality and composition.

5.2 Data accrued, using the techniques included in this

NOTE 6—Commercial reference material of appropriate composition

practice, provide the ability to monitor analytical measurement may not be available for all measurement systems.

system precision and bias.

6.2.2 Samples circulated as part of an interlaboratory testing

program may be used as check standards. For the average

5.3 These data are useful for updating test methods as well

computed from an interlaboratory testing sample to be usable

as for indicating areas of potential measurement system im-

as the Accepted Reference Value (ARV) of a check standard,

provement.

the standard deviation computed from at least 16 non-rejected

5.4 Control chart statistics can be used to compute limits

normally distributed results (single submission per participant)

that the signed difference (∆) between two single results for the

shall not be statistically greater than the reproducibility stan-

same sample obtained under site precision conditions is ex-

dard deviation for the test method. An F-test (0.05 sig.) shall be

pected to fall outside of about 5 % of the time, when each result

applied to test acceptability.

is obtained using a different measurement system in the same

NOTE 7—The uncertainty in the ARV is inversely proportional to the

laboratory executing the same test method, and both systems

square root of the number of values in the average. For example, use of 16

are in a state of statistical control.

non-outlier results in calculating the ARV reduces the uncertainty of the

ARV by a factor of 4 relative to the single result precision. The bias tests

6. Reference Materials

described in this practice assume that the uncertainty in the ARV is

negligible relative to the precision of the measurement system being

6.1 QC samples are used to establish and monitor the

evaluated. If less than 16 values are used in calculating the average, this

precision of the analytical measurement system.

assumption may not be valid. It is also assumed that the property of

6.1.1 Select a stable and homogeneous material having

interest of the check standard is stable over the period of its intended use,

physical or chemical properties, or both, similar to those of

and stored in a manner meeting the requirement of 3.2.17 quality control

(QC) sample.

typical samples tested by the analytical measurement system.

NOTE 8—Examples of exchanges that may be acceptable are ASTM

NOTE 5—When the QC sample is to be utilized for monitoring a process

D02.92 Proficiency Test Program; ASTM D02.01 N.E.G.; ASTM

stream analyzer performance, it is often helpful to supplement the process

D02.01.A Regional Exchanges; International Quality Assurance Exchange

analyzer system with a subsystem to automate the extraction, mixing,

Program, administered by Innotech ALBERTA.

storage, and delivery functions associated with the QC sample.

6.2.3 For some measurement systems, single, pure compo-

6.1.2 Estimate the quantity of the material needed for each

nent materials with known value, or simple gravimetric or

specific lot of QC sample to (1) accommodate the number of

volumetric mixtures of pure components having calculable

analytical measurement systems for which it is to be used

value may serve as a check standard. For example, pure

(laboratory test apparatuses as well as process stream analyzer

solvents, such as 2,2-dimethylbutane, are used as check stan-

systems) and (2) provide determination of QC statistics for a

dards for the measurement of Reid vapor pressure by Test

useful and desirable period of time.

Method D5191. Users should be aware that for measurement

6.1.3 Collect the material into a single container and isolate

systems that show matrix dependencies, accuracy determined

it.

from pure compounds or simple mixtures may not be repre-

6.1.4 Thoroughly mix the material to ensure homogeneity.

sentative of that achieved on actual samples.

6.1.5 Conduct any testing necessary to ensure that the QC

6.3 Validation audit (VA) samples are QC samples and

sample meets the characteristics for its intended use.

check standards, which may, at the option of the users, be

6.1.6 Package or store QC samples, or both, as appropriate

submitted to the measurement system in a blind, or double

for the specific analytical measurement system to ensure that

blind, and random fashion to verify precision and bias esti-

all analyses of samples from a given lot are performed on

mated from routine quality assurance testing.

essentially identical material. If necessary, split the bulk

material collected in 6.1.3 into separate and smaller containers

7. Quality Assurance (QA) Program for Individual

to help ensure integrity over time. (Warning—Treat the

Measurement Systems

material appropriately to ensure its stability, integrity, and

7.1 Overview—A QA program (1) may consist of five

homogeneity over the time period for which it is to stored and

primary activities: (1) monitoring stability and precision

used. For samples that are volatile, such as gasoline, storage in

one large container that is repeatedly opened and closed may

result in loss of light ends. This problem can be avoided by

The boldface numbers in parentheses refer to the list of references at the end of

chilling and splitting the bulk sample into smaller containers, this standard.

D6299 − 23a

through QC sample testing, (2) monitoring accuracy, (3) 7.4.4 Establish a protocol for testing so that all persons who

periodic evaluation of system performance in terms of preci- routinely operate the system participate in generating QC test

sion or bias, or both, (4) proficiency testing through participa- data.

tion in interlaboratory exchange programs where such pro-

7.4.5 Handle and test the QC and check standard samples in

grams are available, and (5) a periodic and independent system

the same manner and under the same conditions as samples or

validation using VA samples may be conducted to provide

materials routinely analyzed by the analytical measurement

additional assurance of the system precision and bias metrics

system.

established from the primary testing activities. At minimum,

7.4.6 When practical, randomize the time of check standard

the QA program must include at least item one and item two,

and additional QC sample testing over the normal hours of

subject to check standard availability (see 7.1.1).

measurement system operation, unless otherwise prescribed in

7.1.1 For some measurement systems, suitable check stan-

the specific test method.

dard materials may not exist, and there may be no reasonably

NOTE 13—Avoid special treatment of QC samples designed to get a

available exchange programs to generate them. For such

better result. Special treatment seriously undermines the integrity of

systems, there is no means of verifying the accuracy of the

precision estimates.

system, and the QA program will only involve monitoring

stability and precision through QC sample testing. 7.5 Evaluation of System Performance in Terms of Precision

and Bias:

NOTE 9—For guidance on the establishment and maintenance of the

7.5.1 Pretreat and screen results accumulated from QC and

essentials of a quality system, see Practice D6792.

check standard testing. Apply statistical techniques to the

NOTE 10—For guidance on the analysis and interpretation of profi-

ciency test (PT) program results, see Guide D7372. pretreated data to identify erroneous data. Plot appropriately

pretreated data on control charts.

7.2 Monitoring System Stability and Precision Through QC

7.5.2 Periodically analyze results from control charts, ex-

Sample Testing—QC test specimen samples from a specific lot

cluding those data points with assignable causes, to quantify

are introduced and tested in the analytical measurement system

the bias and precision estimates for the measurement system.

on a regular basis to establish system performance history in

terms of both stability and precision.

7.6 Proficiency Testing:

7.6.1 Participation in regularly conducted interlaboratory

7.3 Monitoring Accuracy:

exchanges where typical production samples are tested by

7.3.1 Check standards may be tested in the analytical

multiple measurement systems, using a specified (ASTM) test

measurement system on a regular basis to establish system

protocol, provide a cost-effective means of assessing measure-

performance history in terms of accuracy.

ment system accuracy relative to average industry perfor-

7.4 Test Program Conditions/Frequency:

mance. Such proficiency testing may be used instead of check

7.4.1 Conduct both QC sample and check standard testing

standard testing for systems where the timeliness of the

under site precision conditions.

accuracy check is not critical. Proficiency testing may be used

as a supplement to accuracy monitoring by way of check

NOTE 11—It is inappropriate to use test data collected under repeat-

standard testing.

ability conditions to estimate the long term precision achievable by the site

because the majority of the long term measurement system variance is due

7.6.2 Participants plot their signed deviations or statistics

to common cause variations associated with the combination of time,

from the consensus values (exchange averages) on control

operator, reagents, instrumentation calibration factors, and so forth, which

charts in the same fashion described below for check standards,

would not be observable in data obtained under repeatability conditions.

to ascertain if their measurement processes are non-biased

7.4.2 Test the QC and check standard samples on a regular

relative to industry average.

schedule, as appropriate. Principal factors to be considered for

7.7 Independent System Validation—Periodically, at the dis-

determining the frequency of testing are (1) frequency of use of

cretion of users, VA samples may be submitted blind or double

the analytical measurement system, (2) criticality of the pa-

blind for analysis. Precision and bias estimates calculated using

rameter being measured, (3) established system stability and

VA samples test data may be used as an independent validation

precision performance based on historical data, (4) business

of the routine QA program performance statistics.

economics, and (5) regulatory, contractual, or test method

requirements.

NOTE 14—For measurement systems susceptible to human influence,

the precision and bias estimates calculated from data where the analyst is

NOTE 12—At the discretion of the laboratory, check standards may be

aware of the sample status (QC or check standard) or expected values, or

used as QC samples. In this case, the results for the check standards may

both, may underestimate the precision and bias achievable under routine

be used to monitor both stability (see 7.2) and accuracy (see 7.3)

operation. At the discretion of the users, and depending on the criticality

simultaneously. If check standards are expensive, or not available in

of these measurement systems, the QA program may include periodic

sufficient quantity, then separate QC samples are employed. In this case,

blind or double-blind testing of VA samples.

the accuracy (see 7.3) is monitored less frequently, and the QC sample

testing (see 7.2) is used to demonstrate the stability of the measurement

7.7.1 The specific design and approach to the VA testing

system between accuracy tests.

program will depend on features specific to the measurement

7.4.3 It is recommended that a QC sample be analyzed at the system and organizational requirements, and is beyond the

beginning of any set of measurements and immediately after a intended scope of this practice. Some possible approaches are

change is made to the measurement system. noted as follows.


7.7.1.1 If all QC samples or check standards, or both, are Pretreated result5 (2)

submitted blind or double blind and the results are promptly

@test result 2 check standard ARV#/sqrt @~standard error of ARV! 1

evaluated, then additional VA sample testing may not be

necessary.

~std dev of site test method at the ARV level! #

7.7.1.2 QC samples or check standards, or both, may be

where the standard error of the ARV is the uncertainty asso-

submitted as unknown samples at a specific frequency. Such

ciated with the ARV as supplied by the check standard sup-

plier; the standard deviation of site test method at the ARV

submissions should not be so regular as to compromise their

level is the established standard deviation of the site’s test

blind status.

method under site precision conditions at nominally the ARV

7.7.1.3 Retains of previously analyzed samples may be

level. In the event the ARV was established through interla-

resubmitted as unknown samples under site precision condi-

boratory testing program, standard deviations determined

tions. Generally, data from this approach may only yield from outlier-free and normally distributed round robin test

results may be used to calculate the standard error of the

precision estimates as retain samples do not have ARVs.

ARV in accordance with statistical theory. (See Note 16.)

Typically, the differences between the replicate analyses are

plotted on control charts to estimate the precision of the

8.2.2.3 If the ARV was not arrived at by interlaboratory

measurement system. If precision is level dependent, the

testing, a standard error of the ARV should be determined by

differences are scaled by the standard deviation of the mea-

users in a technically acceptable manner.

surement system precision at the level of the average of the two

NOTE 16—It is recommended that the method used to determine the

results.

standard error of the ARV be developed under the guidance of a

statistician.

8. Procedure for Pretreatment, Assessment, and

8.2.3 Pretreatment of results for VA samples is done in the

Interpretation of Test Results

same manner as described in 8.2.1 and 8.2.2.

8.1 Overview—Results accumulated from QC, check

8.3 Control Charts (1, 2)—Individual (I), moving range of

standard, and VA sample testing are pretreated and screened.

two (MR) control charts, and either Strategy 1 (additional run

Statistical techniques are applied to the pretreated data to

rules) (3) or Strategy 2 (EWMA) (4, 5, 6) are prescribed

achieve the following objectives:

techniques for (a) routine recording of QC sample and check

8.1.1 Identify erroneous data (outliers).

standard test results, and (b) immediate assessment of the “in

8.1.2 Assess initial results to validate system stability and

statistical control” (7) status of the system that generated the

assumptions associated with use of control chart technique (for

data. The I chart is intended to detect occurrence of a sudden,

example, dataset normality, adequacy of variations in the

unique event that causes a large deviation from the expected

dataset relative to measurement resolution).

value for the QC material. Strategy 1 (additional Run Rules) or

8.1.3 Deploy, interpret, and maintain control charts.

Strategy 2 (EWMA) is intended to detect small levels of

8.1.4 Quantify long term measurement precision and bias. sustained shifts or drifts of the complete analytical system. MR

chart is intended to detect changes in the analytical system

NOTE 15—Refer to the annex for examples of the application of the

overall variability.

techniques that are discussed below and described in Section 9.

NOTE 17—The control charts and statistical techniques described in this

8.2 Pretreatment of Test Results—The purpose of pretreat-

practice are chosen for their simplicity and ease of use. It is not the intent

ment is to standardize the control chart scales so as to allow for

of this practice to preclude use of other statistically equivalent or more

data from multiple check standards or different batches of QC

advanced techniques, or both.

materials with different property levels to be plotted on the

8.3.1 Control charting may be viewed as a two-staged work

same chart.

process where:

8.2.1 For QC sample test results, no data pretreatment is

Stage 1 comprises assessment of initial test results (for a

necessary if results for different QC samples are plotted in

new batch of QC material) and construction of the control chart

actual measurement units on different control charts.

with graphically represented assessed results and statistical

8.2.2 For check standard sample test results that are to be

values that describes the location of where future test results

plotted on the same control chart, two cases apply, depending

for this QC material from the measurement systems are

on the measurement system precision:

expected to fall within, on the assumption that the measure-

ment system and QC material remains unchanged.

8.2.2.1 Case 1—If either (1) all of the check standard test

Stage 2 comprises regular assessment of future test results

results are from one or more lots of check standard material

(for the QC material) as they arrive in chronological order

having the same ARV(s), or (2) the precision of the measure-

against the established expectations in Stage 1; as well as a

ment system is constant across levels, then pretreatment

periodic reevaluation of the expectation statistics of all accrued

consists of calculating the difference between the test result and

results to update the expectations statistics established from

the ARV:

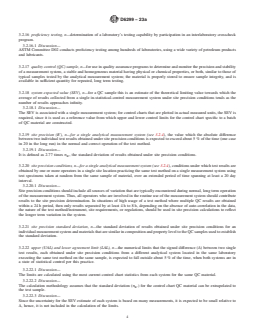

Stage 1, if necessary. See Fig. 1.

Pretreated result 5 test result 2 ARV for the sample (1)

~ !

STAGE 1—Assessment and Chart Construction

8.2.2.2 Case 2—Test results are for multiple lots of check

standards with different ARVs, and the precision of the 8.4 Assessment of Initial Results—Assessment techniques

measurement system is known to vary with level, are applied to test results collected during the initial startup

D6299 − 23a

FIG. 1 Control Chart Work Process Block Diagram

phase of or after significant modifications to a measurement 8.4.3 Test “Normality” Assumption, Independence of Test

system (see Note 19). Perform the following assessment after

Results, and Adequacy of Measurement Resolution—For mea-

at least 20 results (pretreated if appropriate) have become surement systems with no prior performance history, or as a

available. The purpose of this assessment is to ensure that these

diagnostic tool for initial data collected on a new batch of QC

results are suitable for deployment of control charts (described

material, it is useful to test that the results from the measure-

in A1.4).

ment system are reasonably independent, with adequate mea-

surement resolution, and may be adequately modelled by a

NOTE 18—These techniques may also be applied as diagnostic tools to

normal distribution. One way to do this is to use a normal

investigate out-of-control situations.

NOTE 19—During the data collection phase in Stage 1, users may

probability plot and the Anderson-Darling Statistic (see A1.4).

deploy the procedures described in 8.7.2.3 or 8.7.3 (Q–procedure) or 8.7.4

If the results show obvious deviation from normality or

to monitor measurement process performance.

obvious measurement resolution inadequacy (see A1.4), follow

8.4.1 Screen for Suspicious Results—Results (pretreated if

the guidance in A1.4.2.6, Case 2.

appropriate) should first be visually screened for values that are

NOTE 20—Transformations may lead to normally distributed data, but

inconsistent with the remainder of the data set, such as those

these techniques are outside the scope of this practice.

that could have been caused by transcription errors, followed

by an outlier assessment using GESD (see Practice D7915) or

8.4.4 Construction of Control Charts—If no obvious un-

other equivalent statistical technique. Those flagged as suspi-

usual patterns are detected from the run charts, and no obvious

cious should be investigated. Discarding data at this stage must

deviation from normality is detected, proceed with construc-

be supported by evidence gathered from the investigation. If,

tion of the control charts as follows (see A1.5.1 – A1.5.3):

after discarding suspicious pretreated results there are less than

8.4.4.1 I Chart—Calculate the center line, control limits and

15 values remaining, collect additional data and start over.

overlay them on the “run chart” to produce the I chart.

8.4.2 Screen for Unusual Patterns—The next step is to

8.4.4.2 Construct an MR plot and examine it for unusual

examine the results (pretreated if appropriate) for non-random

patterns. If no unusual patterns are found in the MR plot,

patterns such as continuous trending in either direction, un-

calculate and overlay the center line and control limits on the

usual clustering, and cycles. One way to do this is to plot the

MR plot to complete the MR chart.

results on a run chart (see A1.3) and examine the plot. If any

non-random pattern is detected, investigate for and eliminate 8.4.4.3 EWMA Overlay—For strategy 2, calculate the

EWMA values and plot them on the I chart. Calculate the

the root cause(s). Discard the data set and start the procedure

again. EWMA control limits and overlay them on the I chart.
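The chart construction steps in 8.4.4.1 through 8.4.4.3 can be sketched in a few lines of Python. This is an illustrative sketch only: the governing equations are in A1.5.1 to A1.5.3, which are not reproduced in this section. The constants 2.66, 3.27 and 1.128 are the conventional individuals and moving-range chart factors for moving ranges of two, and the default weight `lam=0.4` is assumed here for illustration; none of these should be taken as this practice's stated values without checking the annex. The function names are hypothetical.

```python
from statistics import mean


def moving_ranges(results):
    """Absolute differences between consecutive results (moving range of two)."""
    return [abs(b - a) for a, b in zip(results, results[1:])]


def i_mr_limits(results):
    """Center lines and control limits for the I chart and MR chart.

    Assumes the conventional factors 2.66 (= 3 / 1.128) and 3.27; the
    annex equations should be used to confirm them.
    """
    i_bar = mean(results)
    mr_bar = mean(moving_ranges(results))
    return {
        "center": i_bar,
        "ucl_i": i_bar + 2.66 * mr_bar,
        "lcl_i": i_bar - 2.66 * mr_bar,
        "mr_bar": mr_bar,
        "ucl_mr": 3.27 * mr_bar,
    }


def ewma_overlay(results, center, sigma, lam=0.4):
    """EWMA trace and its 3-sigma limits, seeded at the I-chart center line.

    lam is the EWMA weight (lambda); 0.4 is an assumed default.
    """
    trace, previous = [], center
    for x in results:
        previous = (1 - lam) * previous + lam * x
        trace.append(previous)
    half_width = 3 * sigma * (lam / (2 - lam)) ** 0.5
    return trace, center - half_width, center + half_width


# Short illustrative series; 8.4 asks for at least 20 screened results.
data = [10.0, 10.2, 9.9, 10.1, 10.0]
limits = i_mr_limits(data)
sigma_est = limits["mr_bar"] / 1.128  # assumed MR-based sigma estimate
trace, ewma_lcl, ewma_ucl = ewma_overlay(data, limits["center"], sigma_est)
```

Seeding the EWMA at the center line keeps the first plotted value from being dominated by a single result. In practice the screening steps of 8.4.1 to 8.4.3 would be completed before any limits are computed.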


STAGE 2—Deployment for Monitoring and Periodic Re-assessment

8.4.5 Control Chart Deployment—Put these control charts into operation by regularly plotting the test results (pretreated if appropriate) on the charts and immediately interpreting the charts.

8.5 Control Chart Interpretation:

8.5.1 Apply control chart rules (see A1.5) to determine if the data supports the hypothesis that the measurement system is under the influence of common cause variation only (in statistical control).

8.5.2 Investigate Out-of-Control Points in Detail—Exclude from further data analysis those associated with assignable causes, provided the assignable causes are deemed not to be part of the normal process.

NOTE 21—All data, regardless of in-control or out-of-control status, need to be recorded.

8.6 Scenario 1 for Periodic Updating of Control Charts Parameters:

8.6.1 Scenario 1 covers (1) control charts for a QC material where there had been no change in the system, but more data of the same level has been accrued; or (2) control charts for check standard pretreated results.

8.6.2 When a minimum of 20 new in-control data points becomes available, perform an F-test (see A1.8) of sample variances for the new data set versus the sample variance used to calculate the current control chart limits. If the outcome of the F-test is not significant, and, if the sample variance used to calculate the current control limits is based on less than 100 data points, statistically pool both sample variances and then update the current control limits based on this new pooled variance and I-chart center line ($\bar{I}$ in equations Eq A1.10-A1.13) if updated (see 8.6.2.2).

8.6.2.1 If the outcome of the F-test is not significant, and if the sample variance used to calculate the current control limits is based on more than 100 data points, the statistical pooling of both sample variances to be used for update of the current control limits is recommended, but may be at the discretion of the user.

8.6.2.2 If the outcome of the F-test is not significant, compute the t value in Eq 3 using the average of the new in-control data, the current center line of the I-chart, and the current chart standard deviation ($\sigma_{R'}$) used to compute the I-chart control limits. Re-computing and updating the I-chart center line to reduce its statistical uncertainty is permissible if all of the following conditions are met:

(1) |t| ≤ 1.7

(2) $\mathrm{ewma}_{newdata}$ on one side of center line < 75 %

NOTE 22—The value 1.7 is based on a one-sided t-test of a “difference = 0” null hypothesis versus an alternate hypothesis of either greater than or less than zero as chosen by the user at 5 % significance level, 40 to 250 degrees of freedom rounded up to 1st decimal for simplicity.

$$t = \frac{\bar{I}_{current} - \bar{x}_{newdata}}{\sigma_{R'} \sqrt{\dfrac{1}{n_1} + \dfrac{1}{n_2}}} \tag{3}$$

where:

$\bar{I}_{current}$ = the current I-chart center line, which is the arithmetic average calculated using all in control results without the new data in 8.6.2; $n_1$ is the number of results used to calculate $\bar{I}_{current}$, and

$\bar{x}_{newdata}$ = the arithmetic average of new results in 8.6.2; $n_2$ is the number of results used to calculate $\bar{x}_{newdata}$.

As a safeguard against slow drift in one direction that is below the detection power of the control chart rules, four consecutive adjustments of the I-chart center line in the same direction shall trigger an accuracy verification by Check Standard (CS). Follow Practice D6617 to determine the acceptable tolerance zone for the difference between the result obtained versus the Accepted Reference Value (ARV) of the CS.

NOTE 23—Sigma can be either pooled or un-pooled, depending on whether it was performed in 8.6.2.1.

8.6.3 If the outcome of the F-test is significant, investigate for assignable causes. Update the current control limits based on sample variance and average calculated using the new data if it is determined that this new variance and average is representative of current system performance under common cause variation.

8.7 Scenario 2 for Periodic Updating of Control Charts Parameters:

8.7.1 Scenario 2 covers control charts for QC materials where an assignable cause change in the system had occurred due to a change of QC material as the current QC material supply is exhausted. Minor or major differences in measured property level may exist between QC material batches. Since control limit calculations for the I chart require a center value established by the measurement system, a special transition procedure is required to ensure that the center value for a new batch of QC material is established using results produced by a measurement system that is in statistical control. This practice presents two procedures to be selected at the users’ discretion.

8.7.1.1 Use of Precision Statistics from Previous Control Charts—Control chart statistics achieved ($\bar{I}_{achieved}$, $\sigma_{achieved}$, $\overline{MR}_{achieved}$) from previous completed I, MR chart for similar QC material may be used for the new QC batch transition techniques described in this section if either of the following conditions is met:

(1) test method published reproducibility ($R_{pub}$) is not dependent on the measurement level

(2) for $R_{pub}$ expressed as a function of the measurement level, the ratio: $[R_{pub@\bar{I}_{achieved}} / R_{pub@\text{1st new QC result}}]$ is between 0.85 and 1.15.

where:

$R_{pub@\bar{I}_{achieved}}$ = published method reproducibility evaluated at $\bar{I}_{achieved}$ level, and

$R_{pub@\text{1st new QC result}}$ = published method reproducibility evaluated at the 1st new QC result level.
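Two of the numeric checks above lend themselves to a short sketch: the t statistic of Eq 3 used in 8.6.2.2, and the reproducibility ratio test in condition (2) of 8.7.1.1. The Python below is a minimal illustration under stated assumptions. The function names, the example numbers, and the linear reproducibility function `r_pub` are hypothetical and do not come from this practice. The F-test of A1.8 and the 75 % EWMA condition are not covered.

```python
import math
from statistics import mean


def center_line_t(i_current, n1, new_results, sigma_r):
    """t statistic of Eq 3.

    i_current: current I-chart center line, built from n1 in-control results.
    new_results: the new in-control results (n2 = len(new_results)).
    sigma_r: chart standard deviation used for the I-chart limits.
    """
    n2 = len(new_results)
    x_new = mean(new_results)
    return (i_current - x_new) / (sigma_r * math.sqrt(1.0 / n1 + 1.0 / n2))


def reproducibility_ratio_ok(r_pub, i_achieved, first_new_result,
                             low=0.85, high=1.15):
    """Condition (2) of 8.7.1.1: ratio of published reproducibility at the
    achieved center line to that at the first new QC result."""
    ratio = r_pub(i_achieved) / r_pub(first_new_result)
    return low <= ratio <= high


# Hypothetical numbers for illustration only.
t_value = center_line_t(i_current=50.0, n1=40,
                        new_results=[50.1] * 20, sigma_r=0.5)
may_update_center = abs(t_value) <= 1.7  # condition (1) of 8.6.2.2 only

# Hypothetical level-dependent reproducibility, R_pub = 0.05 * level.
r_pub = lambda level: 0.05 * level
can_reuse_statistics = reproducibility_ratio_ok(r_pub, 50.0, 55.0)
```

Passing `r_pub` as a callable keeps the ratio test independent of any particular test method's published reproducibility function.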

8.7.2 Procedure 1, Concurrent Testing:

8.7.2.1 Collect and prepare a new batch of QC material when the current QC material supply remaining can support no more than 20 analyses.

8.7.2.2 Concurrently test and record data for the new

$\sigma_{achieved}$ meeting the requirements in 8.7.1.1 can be used as $\sigma_{known}$ for this purpose. A $Q_r$ statistic is computed with the arrival of each new QC result commensurate with the 2nd result, and compared against its theoretical mean (0) and 3 sigma limits (± 3). See

...

This document is not an ASTM standard and is intended only to provide the user of an ASTM standard an indication of what changes have been made to the previous version. Because

it may not be technically possible to adequately depict all changes accurately, ASTM recommends that users consult prior editions as appropriate. In all cases only the current version

of the standard as published by ASTM is to be considered the official document.

Designation: D6299 − 23a An American National Standard

Standard Practice for

Applying Statistical Quality Assurance and Control Charting

Techniques to Evaluate Analytical Measurement System

Performance

This standard is issued under the fixed designation D6299; the number immediately following the designation indicates the year of

original adoption or, in the case of revision, the year of last revision. A number in parentheses indicates the year of last reapproval. A

superscript epsilon (´) indicates an editorial change since the last revision or reapproval.

ε NOTE—Editorially corrected an equation in A1.11.2 in September 2023.

1. Scope*

1.1 This practice covers information for the design and operation of a program to monitor and control ongoing stability and

precision and bias performance of selected analytical measurement systems using a collection of generally accepted statistical

quality control (SQC) procedures and tools.

NOTE 1—A complete list of criteria for selecting measurement systems to which this practice should be applied and for determining the frequency at which it should be applied is beyond the scope of this practice. However, some factors to be considered include (1) frequency of use of the analytical measurement system, (2) criticality of the parameter being measured, (3) system stability and precision performance based on historical data, (4) business economics, and (5) regulatory, contractual, or test method requirements.

1.2 This practice is applicable to stable analytical measurement systems that produce results on a continuous numerical scale.

1.3 This practice is applicable to laboratory test methods.

1.4 This practice is applicable to validated process stream analyzers.

1.5 This practice is applicable to monitoring the differences between two analytical measurement systems that purport to measure the same property provided that both systems have been assessed in accordance with the statistical methodology in Practice D6708 and the appropriate bias applied.

NOTE 2—For validation of univariate process stream analyzers, see also Practice D3764.

NOTE 3—One or both of the analytical systems in 1.5 may be laboratory test methods or validated process stream analyzers.

1.6 This practice assumes that the normal (Gaussian) model is adequate for the description and prediction of measurement system behavior when it is in a state of statistical control.

This practice is under the jurisdiction of ASTM Committee D02 on Petroleum Products, Liquid Fuels, and Lubricants and is the direct responsibility of Subcommittee D02.94 on Coordinating Subcommittee on Quality Assurance and Statistics.

Current edition approved Dec. 1, 2023. Published December 2023. Originally approved in 1998. Last previous edition approved in 2023 as D6299 – 23ɛ1. DOI: 10.1520/D6299-23A.

*A Summary of Changes section appears at the end of this standard

Copyright © ASTM International, 100 Barr Harbor Drive, PO Box C700, West Conshohocken, PA 19428-2959. United States


NOTE 4—For non-Gaussian processes, transformations of test results may permit proper application of these tools. Consult a statistician for further guidance and information.
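As a rough illustration of the idea in Note 4, the sketch below compares the sample skewness of a set of right-skewed results before and after a natural-log transformation. The data, the `skewness` helper, and the choice of a log transformation are all assumptions made for illustration; this practice does not prescribe a particular transformation, and the choice should be reviewed with a statistician.

```python
import math
from statistics import mean, pstdev

def skewness(values):
    """Population skewness: mean of cubed standardized deviations."""
    m, s = mean(values), pstdev(values)
    return sum(((v - m) / s) ** 3 for v in values) / len(values)

# Hypothetical right-skewed test results (not from this practice).
raw = [1.1, 1.3, 1.2, 1.8, 1.4, 2.9, 1.2, 1.6, 4.5, 1.5]

# Candidate transformation: natural log (valid only for positive results).
logged = [math.log(v) for v in raw]

print(f"skewness before: {skewness(raw):.2f}")
print(f"skewness after:  {skewness(logged):.2f}")
```

A skewness closer to zero after transformation suggests, but does not prove, that the normal model is a better fit on the transformed scale; control limits would then be computed on that scale.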

1.7 This international standard was developed in accordance with internationally recognized principles on standardization established in the Decision on Principles for the Development of International Standards, Guides and Recommendations issued by the World Trade Organization Technical Barriers to Trade (TBT) Committee.

2. Referenced Documents

2.1 ASTM Standards:

D3764 Practice for Validation of the Performance of Process Stream Analyzer Systems

D4175 Terminology Relating to Petroleum Products, Liquid Fuels, and Lubricants

D5191 Test Method for Vapor Pressure of Petroleum Products and Liquid Fuels (Mini Method)

D6300 Practice for Determination of Precision and Bias Data for Use in Test Methods for Petroleum Products, Liquid Fuels, and Lubricants

D6617 Practice for Laboratory Bias Detection Using Single Test Result from Standard Material

D6708 Practice for Statistical Assessment and Improvement of Expected Agreement Between Two Test Methods that Purport to Measure the Same Property of a Material

D6792 Practice for Quality Management Systems in Petroleum Products, Liquid Fuels, and Lubricants Testing Laboratories

D7372 Guide for Analysis and Interpretation of Proficiency Test Program Results

D7915 Practice for Application of Generalized Extreme Studentized Deviate (GESD) Technique to Simultaneously Identify Multiple Outliers in a Data Set

E177 Practice for Use of the Terms Precision and Bias in ASTM Test Methods

E178 Practice for Dealing With Outlying Observations

E456 Terminology Relating to Quality and Statistics

E691 Practice for Conducting an Interlaboratory Study to Determine the Precision of a Test Method

3. Terminology

3.1 Definitions:

3.1.1 More extensive lists of terms related to quality and statistics are found in Terminology D4175, Practice D6300, and Terminology E456.

3.1.2 repeatability conditions, n—conditions where independent test results are obtained with the same method on identical test items in the same laboratory by the same operator using the same equipment within short intervals of time. D6300

3.1.3 reproducibility (R), n—a quantitative expression for the random error associated with the difference between two independent results obtained under reproducibility conditions that would be exceeded with an approximate probability of 5 % (one case in 20 in the long run) in the normal and correct operation of the test method. D6300
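The 5 % criterion in 3.1.3 can be illustrated with a short calculation. Under the normal model assumed in 1.6, the difference between two independent results that share a standard deviation σ has standard deviation √2·σ, so the absolute difference exceeded about 5 % of the time is roughly 1.96·√2·σ ≈ 2.77·σ. The sketch below is a minimal illustration of that arithmetic; the function name and the σ value are hypothetical and are not taken from this practice or from Practice D6300.

```python
import math
from statistics import NormalDist

def reproducibility_limit(sigma_r: float, exceed_prob: float = 0.05) -> float:
    """Limit that |x1 - x2| exceeds with probability exceed_prob, assuming normality."""
    # Two-sided standard normal quantile (about 1.96 for 5 %).
    z = NormalDist().inv_cdf(1.0 - exceed_prob / 2.0)
    # The difference of two independent results has standard deviation sqrt(2) * sigma.
    return z * math.sqrt(2.0) * sigma_r

sigma_r = 0.50  # hypothetical standard deviation under reproducibility conditions
R = reproducibility_limit(sigma_r)
print(f"R = {R:.3f}")
```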

3.1.4 reproducibility conditions, n—conditions where independent test results are obtained with the same method on identical test items in different laboratories with different operators using different equipment.

3.1.4.1 Discussion—Different laboratory by necessity means a different operator, different equipment, and different location and under different supervisory control. D6300

3.2 Definitions of Terms Specific to This Standard:

3.2.1 More extensive lists of terms related to quality and statistics are found in Terminology D4175, Practice D6300, and Terminology E456.

3.2.2 accepted reference value, n—a value that serves as an agreed-upon reference for comparison and that is derived as (1) a theoretical or established value, based on scientific principles, (2) an assigned value, based on experimental work of some national or international organization, such as the U.S. National Institute of Standards and Technology (NIST), or (3) a consensus value, based on collaborative experimental work under the auspices of a scientific or engineering group.

For referenced ASTM standards, visit the ASTM website, www.astm.org, or contact ASTM Customer Service at service@astm.org. For Annual Book of ASTM Standards volume information, refer to the standard’s Document Summary page on the ASTM website.


3.2.3 accuracy, n—the closeness of agreement between an observed value and an accepted reference value.

3.2.4 analytical measurement system, n—a collection of one or more components or subsystems, such as samplers, test equipment, instrumentation, display devices, data handlers, printouts or output transmitters, that is used to determine a quantitative value of a specific property for an unknown sample in accordance with a test method.

3.2.4.1 Discussion—A standard test method (for example, ASTM, ISO) executed at a single site using a specific instrument is an example of an analytical measurement system.

3.2.4.2 Discussion—The control chart methodology and work processes described in this practice are intended to be applied independently to the final results produced from each individual measurement system, or to differences between results from two individual measurement systems for the same test sample. They are not intended to be applied to combined final results from multiple individual analytical systems or different instruments executing the same test method.

3.2.5 assignable cause, n—a factor that contributes to variation and that is feasible to detect and identify.

3.2.6 bias, n—a systematic error that contributes to the difference between a population mean of the measurements or test results and an accepted reference or true value.
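To make the definition in 3.2.6 concrete, the sketch below estimates bias as the mean of repeated results on a check standard minus its accepted reference value, and forms a one-sample t statistic for that difference. This is a generic textbook calculation shown under stated assumptions, not the bias procedure of this practice or of Practice D6617; the data, the accepted reference value, and the function name are hypothetical.

```python
import math
from statistics import mean, stdev

def bias_estimate(results, arv):
    """Return (bias, t_stat) for results obtained on a material with accepted reference value arv."""
    n = len(results)
    bias = mean(results) - arv                  # estimated systematic error
    std_error = stdev(results) / math.sqrt(n)   # standard error of the mean
    return bias, bias / std_error

# Hypothetical results on a check standard with an accepted reference value of 50.0.
check_results = [50.3, 50.1, 50.4, 50.2, 50.5, 50.3]
bias, t_stat = bias_estimate(check_results, arv=50.0)
print(f"bias = {bias:.3f}, t = {t_stat:.2f}")
```

A large absolute t statistic relative to the Student t critical value for n − 1 degrees of freedom would suggest that the estimated bias is not explained by random error alone.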

3.2.7 blind submission, n—submission of a check standard or quality control (QC) sample for analysis without revealing the expected value to the person performing the analysis.

3.2.8 check standard, n—in QC testing, a material having an accepted reference value used to determine the accuracy of a measurement system.

3.2.8.1 Discussion—A check standard is preferably a material that is either a certified reference material with traceability to a nationally recognized body or a material that has an accepted reference value established through interlaboratory testing. For some measurement systems, a pure, single component material having known value or a simple gravimetric or volumetric mixture of pure components having calculable value may serve as a check standard. Users should be aware that for measurement systems that show matrix dependencies, accuracy determined from pure compounds or simple mixtures may not be representative of that achieved on actual samples.

3.2.9 common (chance, random) cause, n—for quality assurance programs, one of generally numerous factors, individually of relatively small importance, that contributes to variation, and that is not feasible to detect and identify.

3.2.10 control limits, n—limits on a control chart that are used as criteria for signaling the need for action or for judging whether a set of data does or does not indicate a state of statistical control.
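As an illustration of how control limits of the kind defined in 3.2.10 are commonly derived, the sketch below computes the center line and 3-sigma limits of an individuals chart, estimating sigma from the average moving range divided by 1.128 (the d2 constant for a moving range of two). This is a generic Shewhart construction offered as a minimal sketch; the QC results and function name are hypothetical, and the detailed procedures of this practice govern actual use.

```python
from statistics import mean

def individuals_limits(results):
    """Return (center, lcl, ucl) for an individuals chart using the average moving range."""
    if len(results) < 2:
        raise ValueError("need at least two results")
    center = mean(results)
    # Moving range: absolute difference between consecutive results.
    moving_ranges = [abs(b - a) for a, b in zip(results, results[1:])]
    sigma_est = mean(moving_ranges) / 1.128  # d2 constant for ranges of two
    return center, center - 3 * sigma_est, center + 3 * sigma_est

# Hypothetical QC sample results in time order.
qc_results = [10.2, 10.0, 10.3, 9.9, 10.1, 10.4, 10.0, 10.2]
center, lcl, ucl = individuals_limits(qc_results)

# Results outside the limits would signal the need for investigation.
out_of_limits = [x for x in qc_results if not lcl <= x <= ucl]
print(f"center = {center:.3f}, LCL = {lcl:.3f}, UCL = {ucl:.3f}")
print("out of limits:", out_of_limits)
```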

3.2.11 double blind submission, n—submission of a check standard or QC sample for analysis without revealing the check standard or QC sample status and expected value to the person performing the analysis.

3.2.12 in-statistical-control, adj—a process, analytical measurement system, or function that exhibits variations that can only be attributable to common cause.

3.2.13 lot, n—a definite quantity of a product or material accumulated under conditions that are considered uniform for sampling purposes.

3.2.14 out-of-statistical-control, adj—a process, analytical measurement system, or function that exhibits variations in addition to those that can be attributable to common cause and the magnitude of these additional variations exceeds specified limits.

3.2.14.1 Discussion—For clarification, a transition from an in-statistical-control system to an out-of-statistical-control system does not automatically imply that there is a change in the fit for use status of the system in terms of meeting the requirements for the intended application.

3.2.15 precision, n—the closeness of agreement between test results obtained under prescribed conditions.


3.2.16 proficiency testing, n—determination of a laboratory’s testing capability by participation in an interlaboratory crosscheck program.

3.2.16.1 Discussion—ASTM Committee D02 conducts proficiency testing among hundreds of laboratories, using a wide variety of petroleum products and lubricants.

3.2.17 quality control (QC) sample, n—for use in quality assurance programs to determine and monitor the precision and stability of a measurement system, a stable and homogeneous material having physical or chemical properties, or both, similar to those of typical samples tested by the analytical measurement system; the material is properly stored to ensure sample integrity, and is available in sufficient quantity for repeated, long term testing.

3.2.18 system expected value (SEV), n—for a QC sample this is an estimate of the theoretical limiting value towards which the