ISO/IEC 23090-2:2019

Information technology — Coded representation of immersive media — Part 2: Omnidirectional media format

This document specifies the omnidirectional media format for coding, storage, delivery, and rendering of omnidirectional media, including video, images, audio, and timed text.

In an OMAF player the user's viewing perspective is from the centre of the sphere looking outward towards the inside surface of the sphere.

NOTE 1 In this document, only 3 degrees of freedom (3DOF) is supported. In other words, purely translational movement of the user does not result in different omnidirectional media being rendered to the user. For 3DOF support with stereoscopic video, when the user rolls his/her head, there could be a stereoscopic rendering issue.

NOTE 2 Omnidirectional video could contain graphics elements generated by computer graphics but encoded as video.

Technologies de l'information — Représentation codée de média immersifs — Partie 2: Format de média omnidirectionnel

General Information

- Status: Withdrawn

- Publication Date: 13-Jan-2019

- Withdrawal Date: 13-Jan-2019

- Current Stage: 9599 - Withdrawal of International Standard

- Start Date: 07-Jul-2021

- Completion Date: 14-Feb-2026

Relations

- Effective Date: 23-Apr-2020

ISO/IEC 23090-2:2019 - Information technology — Coded representation of immersive media — Part 2: Omnidirectional media format (released 14-Jan-2019)

Frequently Asked Questions

ISO/IEC 23090-2:2019 is a standard published by the International Organization for Standardization (ISO). Its full title is "Information technology — Coded representation of immersive media — Part 2: Omnidirectional media format".

ISO/IEC 23090-2:2019 is classified under the following ICS (International Classification for Standards) categories: 35.040.40 - Coding of audio, video, multimedia and hypermedia information. The ICS classification helps identify the subject area and facilitates finding related standards.

ISO/IEC 23090-2:2019 has the following relationship with other standards: it is linked to ISO/IEC 23090-2:2021, a later edition of the same part. Understanding these relationships helps ensure you are using the most current and applicable version of the standard.

Standards Content (Sample)

INTERNATIONAL STANDARD ISO/IEC 23090-2

First edition 2019-01

Information technology — Coded representation of immersive media — Part 2: Omnidirectional media format

Technologies de l'information — Représentation codée de média immersifs — Partie 2: Format de média omnidirectionnel

Reference number

© ISO/IEC 2019

All rights reserved. Unless otherwise specified, or required in the context of its implementation, no part of this publication may be reproduced or utilized otherwise in any form or by any means, electronic or mechanical, including photocopying, or posting on the internet or an intranet, without prior written permission. Permission can be requested from either ISO at the address below or ISO’s member body in the country of the requester.

ISO copyright office

CP 401 • Ch. de Blandonnet 8

CH-1214 Vernier, Geneva

Phone: +41 22 749 01 11

Fax: +41 22 749 09 47

Email: copyright@iso.org

Website: www.iso.org

Published in Switzerland

ii © ISO/IEC 2019 – All rights reserved

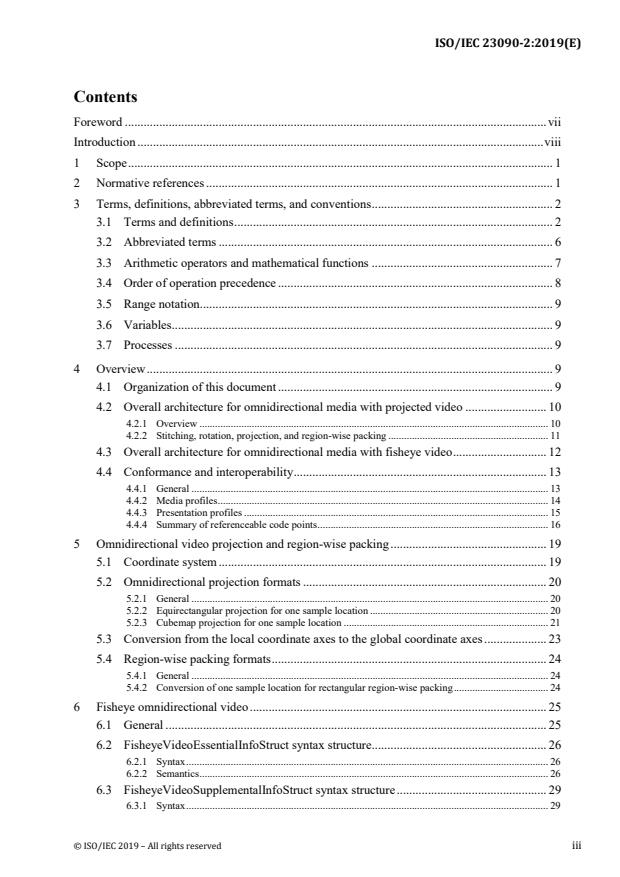

Contents

Foreword
Introduction
1 Scope
2 Normative references
3 Terms, definitions, abbreviated terms, and conventions
3.1 Terms and definitions
3.2 Abbreviated terms
3.3 Arithmetic operators and mathematical functions
3.4 Order of operation precedence
3.5 Range notation
3.6 Variables
3.7 Processes
4 Overview
4.1 Organization of this document
4.2 Overall architecture for omnidirectional media with projected video
4.2.1 Overview
4.2.2 Stitching, rotation, projection, and region-wise packing
4.3 Overall architecture for omnidirectional media with fisheye video
4.4 Conformance and interoperability
4.4.1 General
4.4.2 Media profiles
4.4.3 Presentation profiles
4.4.4 Summary of referenceable code points
5 Omnidirectional video projection and region-wise packing
5.1 Coordinate system
5.2 Omnidirectional projection formats
5.2.1 General
5.2.2 Equirectangular projection for one sample location
5.2.3 Cubemap projection for one sample location
5.3 Conversion from the local coordinate axes to the global coordinate axes
5.4 Region-wise packing formats
5.4.1 General
5.4.2 Conversion of one sample location for rectangular region-wise packing
6 Fisheye omnidirectional video
6.1 General
6.2 FisheyeVideoEssentialInfoStruct syntax structure
6.2.1 Syntax
6.2.2 Semantics
6.3 FisheyeVideoSupplementalInfoStruct syntax structure
6.3.1 Syntax

© ISO/IEC 2019 – All rights reserved iii

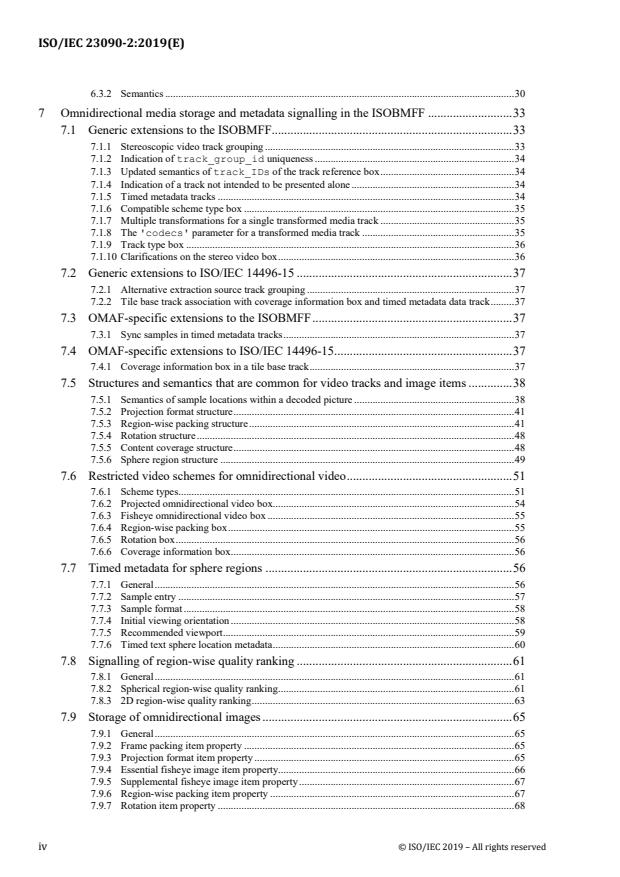

6.3.2 Semantics
7 Omnidirectional media storage and metadata signalling in the ISOBMFF
7.1 Generic extensions to the ISOBMFF
7.1.1 Stereoscopic video track grouping
7.1.2 Indication of track_group_id uniqueness
7.1.3 Updated semantics of track_IDs of the track reference box
7.1.4 Indication of a track not intended to be presented alone
7.1.5 Timed metadata tracks
7.1.6 Compatible scheme type box
7.1.7 Multiple transformations for a single transformed media track
7.1.8 The 'codecs' parameter for a transformed media track
7.1.9 Track type box
7.1.10 Clarifications on the stereo video box
7.2 Generic extensions to ISO/IEC 14496-15
7.2.1 Alternative extraction source track grouping
7.2.2 Tile base track association with coverage information box and timed metadata data track
7.3 OMAF-specific extensions to the ISOBMFF
7.3.1 Sync samples in timed metadata tracks
7.4 OMAF-specific extensions to ISO/IEC 14496-15
7.4.1 Coverage information box in a tile base track
7.5 Structures and semantics that are common for video tracks and image items
7.5.1 Semantics of sample locations within a decoded picture
7.5.2 Projection format structure
7.5.3 Region-wise packing structure
7.5.4 Rotation structure
7.5.5 Content coverage structure
7.5.6 Sphere region structure
7.6 Restricted video schemes for omnidirectional video
7.6.1 Scheme types
7.6.2 Projected omnidirectional video box
7.6.3 Fisheye omnidirectional video box
7.6.4 Region-wise packing box
7.6.5 Rotation box
7.6.6 Coverage information box
7.7 Timed metadata for sphere regions
7.7.1 General
7.7.2 Sample entry
7.7.3 Sample format
7.7.4 Initial viewing orientation
7.7.5 Recommended viewport
7.7.6 Timed text sphere location metadata
7.8 Signalling of region-wise quality ranking
7.8.1 General
7.8.2 Spherical region-wise quality ranking
7.8.3 2D region-wise quality ranking
7.9 Storage of omnidirectional images
7.9.1 General
7.9.2 Frame packing item property
7.9.3 Projection format item property
7.9.4 Essential fisheye image item property
7.9.5 Supplemental fisheye image item property
7.9.6 Region-wise packing item property
7.9.7 Rotation item property

7.9.8 Coverage information item property
7.9.9 Initial viewing orientation item property
7.10 Storage of timed text for omnidirectional video
7.10.1 General
7.10.2 OMAF timed text configuration box
7.10.3 IMSC1 tracks
7.10.4 WebVTT tracks
8 Omnidirectional media encapsulation and signalling in DASH
8.1 Architecture of DASH delivery in OMAF
8.2 Usage of DASH in OMAF
8.2.1 General
8.2.2 Signalling of stereoscopic frame packing
8.2.3 Carriage of timed metadata
8.3 DASH MPD descriptors for omnidirectional media
8.3.1 XML namespace and schema
8.3.2 Signalling of projection type information
8.3.3 Signalling of region-wise packing type
8.3.4 Signalling of content coverage
8.3.5 Signalling of spherical region-wise quality ranking
8.3.6 Signalling of 2D region-wise quality ranking
8.3.7 Signalling of fisheye omnidirectional video
9 Omnidirectional media encapsulation and signalling in MMT
9.1 Architecture of MMT delivery in OMAF
9.2 OMAF signalling in MPEG composition information
9.3 VR application-specific MMT signalling
9.3.1 General
9.3.2 MMT signalling
10 Media profiles
10.1 Video profiles
10.1.1 Overview
10.1.2 HEVC-based viewport-independent OMAF video profile
10.1.3 HEVC-based viewport-dependent OMAF video profile
10.1.4 AVC-based viewport-dependent OMAF video profile
10.2 Audio profiles
10.2.1 Overview
10.2.2 OMAF 3D audio baseline profile
10.2.3 OMAF 2D audio legacy profile
10.3 Image profiles
10.3.1 Overview
10.3.2 Common specifications for image profiles
10.3.3 OMAF HEVC image profile
10.3.4 OMAF legacy image profile
10.4 Timed text profiles
10.4.1 Overview
10.4.2 OMAF IMSC1 timed text profile
10.4.3 OMAF WebVTT timed text profile
11 Presentation profiles
11.1 OMAF viewport-independent baseline presentation profile
11.1.1 General (informative)

11.1.2 ISO base media file format constraints
11.2 OMAF viewport-dependent baseline presentation profile
11.2.1 General
11.2.2 ISO base media file format constraints
Annex A (normative) OMAF DASH schema
Annex B (normative) DASH integration of media profiles
Annex C (normative) CMAF integration of media profiles
Annex D (informative) Viewport-dependent omnidirectional video processing
Annex E (informative) DASH MPD examples
Annex F (informative) MMT signalling examples

Foreword

ISO (the International Organization for Standardization) and IEC (the International Electrotechnical Commission) form the specialized system for worldwide standardization. National bodies that are members of ISO or IEC participate in the development of International Standards through technical committees established by the respective organization to deal with particular fields of technical activity. ISO and IEC technical committees collaborate in fields of mutual interest. Other international organizations, governmental and non-governmental, in liaison with ISO and IEC, also take part in the work. In the field of information technology, ISO and IEC have established a joint technical committee, ISO/IEC JTC 1.

The procedures used to develop this document and those intended for its further maintenance are described in the ISO/IEC Directives, Part 1. In particular the different approval criteria needed for the different types of document should be noted. This document was drafted in accordance with the editorial rules of the ISO/IEC Directives, Part 2 (see www.iso.org/directives).

Attention is drawn to the possibility that some of the elements of this document may be the subject of patent rights. ISO and IEC shall not be held responsible for identifying any or all such patent rights. Details of any patent rights identified during the development of the document will be in the Introduction and/or on the ISO list of patent declarations received (see www.iso.org/patents).

Any trade name used in this document is information given for the convenience of users and does not constitute an endorsement.

For an explanation of the voluntary nature of standards, the meaning of ISO specific terms and expressions related to conformity assessment, as well as information about ISO's adherence to the World Trade Organization (WTO) principles in the Technical Barriers to Trade (TBT) see: www.iso.org/iso/foreword.html.

This document was prepared by Technical Committee ISO/IEC JTC 1, Information technology, Subcommittee SC 29, Coding of audio, picture, multimedia and hypermedia information.

A list of all parts in the ISO/IEC 23090 series can be found on the ISO website.

Any feedback or questions on this document should be directed to the user’s national standards body. A complete listing of these bodies can be found at www.iso.org/members.html.

Introduction

When omnidirectional media content is consumed with a head-mounted display and headphones, only the parts of the media that correspond to the user's viewing orientation are rendered, as if the user were in the spot where and when the media was captured. One of the most popular forms of omnidirectional media applications is omnidirectional video, also known as 360° video. Omnidirectional video is typically captured by multiple cameras that cover up to 360° of the scene. Compared to traditional media application formats, the end-to-end technology for omnidirectional video (from capture to playback) is more easily fragmented due to various capturing and video projection technologies. On the capture side, there exist many different types of cameras capable of capturing 360° video, and on the playback side there are many different devices that are able to play back 360° video with different processing capabilities. To avoid fragmentation of omnidirectional media content and devices, a standardized format for omnidirectional media applications is specified in this document.

This document defines a media format that enables omnidirectional media applications, focusing on 360° video, images, and audio, as well as associated timed text. What is specified in this document includes (but is not limited to):

1) a coordinate system that consists of a unit sphere and three coordinate axes, namely the X (back-to-front) axis, the Y (lateral, side-to-side) axis, and the Z (vertical, up) axis,

2) projection and rectangular region-wise packing methods that may be used for conversion of a spherical video sequence or image into a two-dimensional rectangular video sequence or image, respectively,

3) storage of omnidirectional media and the associated metadata using the ISO base media file format (ISOBMFF) as specified in ISO/IEC 14496-12,

4) encapsulation, signalling, and streaming of omnidirectional media in a media streaming system, e.g., dynamic adaptive streaming over HTTP (DASH) as specified in ISO/IEC 23009-1 or MPEG media transport (MMT) as specified in ISO/IEC 23008-1, and

5) media profiles and presentation profiles that provide interoperability and conformance points for media codecs as well as media coding and encapsulation configurations that may be used for compression, streaming, and playback of the omnidirectional media content.

INTERNATIONAL STANDARD ISO/IEC 23090-2:2019(E)

Information technology — Coded representation of immersive media — Part 2: Omnidirectional media format

1 Scope

This document specifies the omnidirectional media format for coding, storage, delivery, and rendering of omnidirectional media, including video, images, audio, and timed text.

In an OMAF player the user's viewing perspective is from the centre of the sphere looking outward towards the inside surface of the sphere.

NOTE 1 In this document, only 3 degrees of freedom (3DOF) is supported. In other words, purely translational movement of the user does not result in different omnidirectional media being rendered to the user. For 3DOF support with stereoscopic video, when the user rolls his/her head, there could be a stereoscopic rendering issue.

NOTE 2 Omnidirectional video could contain graphics elements generated by computer graphics but encoded as video.

2 Normative references

The following documents are referred to in the text in such a way that some or all of their content constitutes requirements of this document. For dated references, only the edition cited applies. For undated references, the latest edition of the referenced document (including any amendments) applies.

ISO/IEC 10918-1, Information technology — Digital compression and coding of continuous-tone still images — Part 1: Requirements and guidelines

ISO/IEC 14496-1, Information technology — Coding of audio-visual objects — Part 1: Systems

ISO/IEC 14496-3:2009, Information technology — Coding of audio-visual objects — Part 3: Audio

ISO/IEC 14496-10:2014, Information technology — Coding of audio-visual objects — Part 10: Advanced video coding

ISO/IEC 14496-12, Information technology — Coding of audio-visual objects — Part 12: ISO base media file format

ISO/IEC 14496-14, Information technology — Coding of audio-visual objects — Part 14: MP4 file format

ISO/IEC 14496-15:2017, Information technology — Coding of audio-visual objects — Part 15: Carriage of network abstraction layer (NAL) unit structured video in the ISO base media file format

ISO/IEC 14496-30:2018, Information technology — Coding of audio-visual objects — Part 30: Timed text and other visual overlays in ISO base media file format

ISO/IEC 23000-19:2018, Information technology — Multimedia application format (MPEG-A) — Part 19: Common media application format (CMAF) for segmented media

ISO/IEC 23003-4:2015, Information technology — MPEG audio technologies — Part 4: Dynamic range control

ISO/IEC 23008-1:2017, Information technology — High efficiency coding and media delivery in heterogeneous environments — Part 1: MPEG media transport (MMT)

ISO/IEC 23008-2:2017, Information technology — High efficiency coding and media delivery in heterogeneous environments — Part 2: High efficiency video coding

ISO/IEC 23008-3:2015, Information technology — High efficiency coding and media delivery in heterogeneous environments — Part 3: 3D audio

ISO/IEC 23008-12, Information technology — High efficiency coding and media delivery in heterogeneous environments — Part 12: Image file format

ISO/IEC 23009-1, Information technology — Dynamic adaptive streaming over HTTP (DASH) — Part 1: Media presentation description and segment formats

ISO/IEC 23091-2, Information technology — Coding-independent code points — Part 2: Video

ISO/IEC 23091-3, Information technology — Coding-independent code points — Part 3: Audio

W3C Recommendation, TTML profiles for Internet media subtitles and captions 1.0 (IMSC1)

WebVTT: The web video text tracks format, W3C (Working Draft, 08 August 2017)

W3C Recommendation, XML schema part 1: Structures

W3C Recommendation, XML schema part 2: Datatypes

IETF BCP 47, Tags for Identifying Languages

IETF RFC 6381, MIME Codecs and Profiles

3 Terms, definitions, abbreviated terms, and conventions

3.1 Terms and definitions

For the purposes of this document, the terms and definitions in ISO/IEC 14496-12, ISO/IEC 23008-12, ISO/IEC 23009-1 and the following apply. If terms defined in ISO/IEC 14496-12, ISO/IEC 23008-12 and ISO/IEC 23009-1 are also defined in this document, the definitions in this document are applicable.

NOTE In particular, the terms coded image, coded image item, derived image, derived image item, image item, reconstructed image, and source image item are defined in ISO/IEC 23008-12.

ISO and IEC maintain terminological databases for use in standardization at the following addresses:

− IEC Electropedia: available at http://www.electropedia.org/

− ISO Online browsing platform: available at http://www.iso.org/obp

3.1.1

azimuth

first of the two sphere coordinates describing the location of a point on the sphere

Note 1 to entry: Azimuth and elevation are specified in subclause 5.1.

3.1.2

azimuth circle

circle on the sphere connecting all points with the same azimuth value

Note 1 to entry: An azimuth circle is always a great circle (3.1.17).

3.1.3

circular image

image captured with a fisheye lens (3.1.14)

3.1.4

closed scheme type

scheme type (3.1.35) that clearly specifies which transformations are allowed and does not allow future extensions

Footnote to ISO/IEC 23091-2 in Clause 2: Under preparation. Stage at time of FDIS ballot: ISO/IEC DIS 23091-2, 40.99.

3.1.5

composition-aligned sample

sample in a track that is associated with another track, and either has the same composition time as a particular sample in the other track, or, when a sample with the same composition time is not available in the other track, the closest preceding composition time relative to that of a particular sample in the other track

3.1.6

constituent picture

part of a spatially frame-packed stereoscopic picture that corresponds to one view, or the picture itself when frame packing is not in use or the temporal interleaving frame packing arrangement is in use

3.1.7

content coverage

one or more sphere regions that are covered by the content represented by the track or by an image item

3.1.8

elevation

second of the two sphere coordinates describing the location of a point on the sphere

Note 1 to entry: Azimuth and elevation are specified in subclause 5.1.

3.1.9

elevation circle

circle on the sphere connecting all points with the same elevation value

Note 1 to entry: When the elevation is zero, an elevation circle is also a great circle (3.1.17). This coincides with the equator on Earth.

3.1.10

extractor track

track that has untransformed sample entry type (3.1.44) equal to 'hvc2', 'avc2', or 'avc4' and contains one or more 'scal' track references
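The definition above amounts to a two-part test, which can be sketched as follows. This is only an illustration: `TrackInfo` and `is_extractor_track` are hypothetical names, not structures defined by this document, and the sketch assumes a file parser has already extracted the untransformed sample entry type and the track references.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Sample entry types named in the definition of an extractor track (3.1.10).
EXTRACTOR_SAMPLE_ENTRY_TYPES = {"hvc2", "avc2", "avc4"}


@dataclass
class TrackInfo:
    """Hypothetical summary of one parsed ISOBMFF track (an assumption of this sketch)."""
    untransformed_sample_entry_type: str
    # Maps a track reference type (four-character code) to the referenced track IDs.
    track_references: Dict[str, List[int]] = field(default_factory=dict)


def is_extractor_track(track: TrackInfo) -> bool:
    """Return True when both conditions of the definition hold."""
    has_scal_reference = len(track.track_references.get("scal", [])) > 0
    has_extractor_type = (
        track.untransformed_sample_entry_type in EXTRACTOR_SAMPLE_ENTRY_TYPES
    )
    return has_extractor_type and has_scal_reference
```

For instance, `is_extractor_track(TrackInfo("hvc2", {"scal": [2, 3]}))` holds, while a track with the same sample entry type but no 'scal' references does not qualify.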

3.1.11

field of view

extent of the observable world in captured/recorded content or in a physical display device

3.1.12

file decoder

collective term for file/segment decapsulation and decoding of video, audio or image bitstreams

3.1.13

file decoding process

process specified as a part of a media profile specification that takes as input a set of ISOBMFF tracks or items and derives either of the following:

− decoded pictures or audio samples, and rendering metadata for them;

− a fully rendered audio scene in the reference system

3.1.14

fisheye lens

wide-angle camera lens that usually captures an approximately hemispherical field of view (3.1.11) and projects it as a circular image (3.1.3)

3.1.15

fisheye video

video captured by fisheye lenses (3.1.14)

3.1.16

global coordinate axes

coordinate axes that are associated with audio, video, and images representing the same acquisition position and intended to be rendered together

Note 1 to entry: Coordinate axes are specified in subclause 5.1.

Note 2 to entry: The origin of the global coordinate axes is usually the same as the centre point of a device or rig used for omnidirectional audio/video acquisition as well as the position of the observer's head in the three-dimensional space in which the audio and video tracks are located.

Note 3 to entry: In the absence of the initial viewing orientation metadata (see subclause 7.7.4 for tracks or subclause 7.9.9 for image items), the initial viewing orientation should be inferred to be equal to (0, 0, 0) for (centre_azimuth, centre_elevation, centre_tilt) relative to the global coordinate axes.

3.1.17

great circle

intersection of the sphere and a plane that passes through the centre point of the sphere

Note 1 to entry: A great circle is also known as an orthodrome or Riemannian circle.

Note 2 to entry: The centre of the sphere and the centre of a great circle are co-located.

3.1.18

guard band

area in a packed picture (3.1.23) that is not rendered but may be used to improve the rendered part of the packed picture to avoid or mitigate visual artifacts such as seams

Note 1 to entry: Guard bands are associated with packed regions (3.1.24) as described in subclause 7.5.3.

3.1.19

local coordinate axes

coordinate axes obtained after applying rotation to the global coordinate axes (3.1.16)

3.1.20

OMAF player

collective term for

− file/segment reception or file access;

− file/segment decapsulation;

− decoding of audio, video, image, or timed text bitstreams;

− rendering of audio, images, or timed text; and

− viewport selection

3.1.21

omnidirectional media

media such as image or video and its associated audio that enable rendering according to the user's viewing orientation (3.1.45), if consumed with a head-mounted device, or according to the user's desired viewport (3.1.46), otherwise, as if the user were in the spot where and when the media was captured

3.1.22

open-ended scheme type

scheme type (3.1.35) that allows future extensions

3.1.23

packed picture

picture that is represented as a coded picture in the coded video bitstream

Note 1 to entry: A packed picture may result from region-wise packing (3.1.31) of a projected picture (3.1.25).

3.1.24

packed region

region in a packed picture (3.1.23) that is mapped to a projected region (3.1.26) as specified by the region-wise packing (3.1.31) signalling

3.1.25

projected picture

picture that has a representation format specified by an omnidirectional video projection format

Note 1 to entry: Omnidirectional projection formats are specified in subclause 5.2.

3.1.26

projected region

region in a projected picture (3.1.25) that is mapped to a packed region (3.1.24) as specified by the region-wise packing signalling

3.1.27

projection

inverse of the process by which the samples of a projected picture (3.1.25) are mapped to a set of positions identified by a set of azimuth and elevation coordinates on a unit sphere

3.1.28

quality ranking region

region that is associated with a quality ranking value and is specified relative to a decoded picture or a sphere

3.1.29

quality ranking 2D region

quality ranking region (3.1.28) that is specified relative to a decoded picture

3.1.30

quality ranking sphere region

quality ranking region (3.1.28) that is specified relative to a sphere

3.1.31

region-wise packing

inverse of the process of transformation, resizing, and relocating of packed regions (3.1.24) of a packed picture (3.1.23) to remap to projected regions (3.1.26) of a projected picture (3.1.25)

3.1.32

rendering

process of generating audio-visual content for playback from the decoded audio-visual data according to the user's viewing orientation (3.1.45), if consumed with a head-mounted device, or according to the user's desired viewport (3.1.46), otherwise

3.1.33

sample

all the data associated with a single time or single element in one of the three sample arrays that represent a picture

Note 1 to entry: When the term sample is used in the context of a track, it refers to all the data associated with a single time of that track, where a time is either a decoding time or a composition time. When the term sample is used in the context of a picture, e.g., in the phrase "luma sample", it refers to a single element in one of the three sample arrays that represent the picture.

3.1.34

sample entry type

four-character code that is either the value of the format field of a SampleEntry directly contained in SampleDescriptionBox or the data_format value of an instance of OriginalFormatBox

3.1.35

scheme type

type that parameterizes an encrypted, restricted, or incomplete media track

3.1.36

sphere coordinates

azimuth (ϕ) and elevation (θ) that identify a location of a point on the unit sphere
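To make the pair (ϕ, θ) concrete, the sketch below converts sphere coordinates into a point on the unit sphere. The normative relation is specified in subclause 5.1, which is not reproduced in this sample, so the formulas here are an assumption: angles are taken in degrees, azimuth is measured in the X-Y plane from the X axis towards the Y axis, and elevation is measured from the X-Y plane towards the Z axis. The function name `sphere_to_cartesian` is hypothetical.

```python
import math
from typing import Tuple


def sphere_to_cartesian(azimuth_deg: float, elevation_deg: float) -> Tuple[float, float, float]:
    """Map (azimuth, elevation) in degrees to (X, Y, Z) on the unit sphere.

    Assumed convention (not taken from this excerpt):
    X = cos(theta) * cos(phi), Y = cos(theta) * sin(phi), Z = sin(theta).
    """
    phi = math.radians(azimuth_deg)      # azimuth
    theta = math.radians(elevation_deg)  # elevation
    x = math.cos(theta) * math.cos(phi)
    y = math.cos(theta) * math.sin(phi)
    z = math.sin(theta)
    return (x, y, z)
```

Under these assumptions, azimuth 0 and elevation 0 map to the positive X axis, and an elevation of 90 degrees maps to the positive Z axis regardless of azimuth.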

3.1.37

sphere region

region on a sphere, specified either by four great circles (3.1.17) or by two azimuth circles (3.1.2) and two elevation circles (3.1.9), or such a region on the rotated sphere after applying a certain amount of yaw, pitch, and roll rotations

3.1.38

sub-picture

picture that represents a spatial subset of the original video content, which has been split into spatial subsets before video encoding at the content production side

3.1.39

sub-picture bitstream

bitstream that represents a spatial subset of the original video content, which has been split into spatial subsets before video encoding at the content production side

3.1.40

sub-picture track

track that represents a sub-picture bitstream (3.1.39)

3.1.41

tilt angle

angle indicating the amount of tilt of a sphere region (3.1.37), measured as the amount of rotation of the sphere region along the axis originating from the sphere origin passing through the centre point of the sphere region, where the angle value increases clockwise when looking from the origin towards the positive end of the axis

3.1.42

time-parallel sample

sample in a track that is associated with another track, and either has the same decoding time as a particular sample in the other track, or, when a sample with the same decoding time in the other track is not available, the closest preceding decoding time relative to that of a particular sample in the other track
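The lookup described by this definition can be sketched as a binary search. The sketch assumes the decoding times of the associated track are available as a list sorted in ascending order, in a timescale shared with the other track; `time_parallel_sample_index` is a hypothetical helper, not something defined by this document.

```python
from bisect import bisect_right
from typing import List, Optional


def time_parallel_sample_index(decoding_times: List[int], target_time: int) -> Optional[int]:
    """Return the index of the time-parallel sample for target_time.

    decoding_times: ascending decoding times of the samples in the associated track.
    target_time: decoding time of the particular sample in the other track.
    Picks the sample with the same decoding time, or else the closest preceding
    one; returns None when no sample at or before target_time exists.
    """
    # bisect_right gives the number of entries that are <= target_time.
    count_not_after = bisect_right(decoding_times, target_time)
    if count_not_after == 0:
        return None
    return count_not_after - 1
```

With decoding times `[0, 10, 20, 30]`, a target time of 20 selects index 2 (exact match) and a target time of 25 also selects index 2 (closest preceding). The same approach applies to the composition-aligned sample (3.1.5) if composition times are used instead.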

3.1.43

track sample entry type

sample entry type (3.1.34) of a SampleEntry directly contained in SampleDescriptionBox

3.1.44

untransformed sample entry type

track sample entry type (3.1.43) that would apply if no transformations had been performed to a transformed media track

3.1.45

viewing orientation

triplet of azimuth, elevation, and tilt angle characterizing the orientation in which a user is consuming the audio-visual content; in case of image or video, characterizing the orientation of the viewport (3.1.46)

3.1.46

viewport

region of omnidirectional image or video suitable for display and viewing by the user

3.2 Abbreviated terms

2D two-dimensional

CICP coding-independent code points (specified in ISO/IEC 23091-1, 23091-2, and 23091-3)

CMAF common media application format (specified in ISO/IEC 23000-19)

DASH MPEG dynamic adaptive streaming over HTTP (specified in ISO/IEC 23009-1)

ERP equirectangular projection

FOV field of view

ISOBMFF ISO base media file format (specified in ISO/IEC 14496-12)

HEVC high efficiency video coding (specified in ISO/IEC 23008-2)

HMD head-mounted display

HOA higher-order ambisonics

MCTS motion-constrained tile set

MMT MPEG media transport (specified in ISO/IEC 23008-1)

OMAF omnidirectional media format (specified in ISO/IEC 23090-2)

SRD spatial relationship description

URN uniform resource name

VR virtual reality

3.3 Arithmetic operators and mathematical functions

+ addition

− subtraction (as a two-argument operator) or negation (as a unary prefix operator)

* multiplication, including matrix multiplication

$x^{y}$ exponentiation, specifies x to the power of y

(In other contexts, such notation is used for superscripting not intended for interpretation as exponentiation.)

/ integer division with truncation of the result toward zero

EXAMPLE 7 / 4 and −7 / −4 are truncated to 1 and −7 / 4 and 7 / −4 are truncated to −1.
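The truncation rule matters when these formulas are implemented in a language whose integer division rounds differently. As a minimal sketch, assuming Python (whose `//` operator rounds toward negative infinity rather than toward zero), the "/" operator defined here could be written as follows; the helper name `div_trunc` is hypothetical.

```python
def div_trunc(x, y):
    """Integer division with truncation of the result toward zero."""
    # Divide the magnitudes, then restore the sign of the quotient.
    q = abs(x) // abs(y)
    return q if (x < 0) == (y < 0) else -q
```

With this helper, `div_trunc(7, 4)` and `div_trunc(-7, -4)` give 1, and `div_trunc(-7, 4)` and `div_trunc(7, -4)` give −1, matching the EXAMPLE above, whereas the built-in expression `-7 // 4` evaluates to −2.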

÷ denotes division in mathematical equations where no truncation or rounding is intended

$\frac{x}{y}$ denotes division in mathematical equations where no truncation or rounding is intended

$\sum_{i=x}^{y} f(i)$ summation of f( i ) with i taking all integer values from x up to and including y

x % y modulus

(Remainder of x divided by y, defined only for integers x and y with x >= 0 and y > 0.)

Asin( x ) trigonometric inverse sine function, operating on an argument x that is in the range of −1.0 to 1.0, inclusive,

with an output value in the range of −π÷2 to π÷2, inclusive, in units of radians

Atan( x ) trigonometric inverse tangent function, operating on an argument x that is any real number, with an output value

in the range of −π÷2 to π÷2, inclusive, in units of radians

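Atan2( y, x ) is defined as:

$$
\mathrm{Atan2}(y, x) =
\begin{cases}
\mathrm{Atan}\left(\dfrac{y}{x}\right) & \text{if } x > 0 \\
\mathrm{Atan}\left(\dfrac{y}{x}\right) + \pi & \text{if } x < 0 \text{ and } y \ge 0 \\
\mathrm{Atan}\left(\dfrac{y}{x}\right) - \pi & \text{if } x < 0 \text{ and } y < 0 \\
+\dfrac{\pi}{2} & \text{if } x = 0 \text{ and } y \ge 0 \\
-\dfrac{\pi}{2} & \text{otherwise}
\end{cases}
$$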
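The piecewise definition of Atan2 above can be sketched directly in code. The following is a minimal illustration in Python, not a normative implementation; the function name `atan2_spec` is hypothetical, and the last two cases are taken to be +π÷2 and −π÷2, which is the usual form of this definition and is an assumption wherever the extracted text is incomplete.

```python
import math


def atan2_spec(y, x):
    """Atan2( y, x ) following the five-case piecewise definition."""
    if x > 0:
        return math.atan(y / x)
    if x < 0 and y >= 0:
        return math.atan(y / x) + math.pi
    if x < 0 and y < 0:
        return math.atan(y / x) - math.pi
    if x == 0 and y >= 0:
        # Assumed value +pi/2 (includes the point y == 0, x == 0).
        return math.pi / 2
    # Remaining case: x == 0 and y < 0, assumed value -pi/2.
    return -math.pi / 2
```

For ordinary inputs this agrees with the standard library function `math.atan2`. One difference worth noting is the origin: the definition yields +π÷2 for x equal to 0 and y equal to 0, whereas `math.atan2(0.0, 0.0)` returns 0.0, so a direct substitution of the library function is not exactly equivalent.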

Cos( x ) trigonometric cosine function operating on an argument x in units of radians

Floor( x ) largest integer less than or equal to x

Round( x ) = Sign( x ) * Floor(

...
